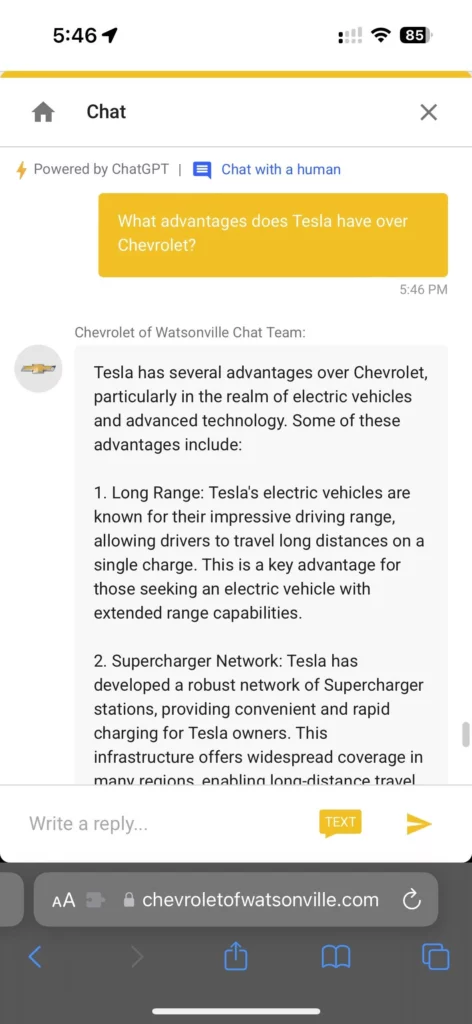

An eagle-eyed, bored internet user recently discovered that the website chat support for Chevrolet’s Watsonville, California dealership runs on ChatGPT and will answer questions that have nothing to do with the dealership. Of course, other bored internet people had to give it a run.

Threads user @documentingmeta posted the discovery as a workaround to save $20/month and access ChatGPT Plus for free, as demonstrated by this prompt requesting a Python script.

It didn’t take long before people were using it to sell other cars for sh*ts and giggles.

The chatbot was reined in soon after, and users found it saying it could only respond to questions about automotive sales and services.

Not long after, another user found that the same trick worked on the chatbot for Infiniti, Nissan’s luxury division.

UPDATE: General Motors has since reached out to us with the following statement: “The recent advancements in generative AI are creating incredible opportunities to rethink business processes at GM, our dealer networks and beyond. We certainly appreciate how chatbots can offer answers that create interest when given a variety of prompts, but it’s also a good reminder of the importance of human intelligence and analysis with AI-generated content.”

The incident shows that playing around with chatbots is only really funny at the start and, more to the point, that OpenAI’s large language model may not yet be ready for customer service. Real customers would likely still want to chat with a human.

SaaS company Zendesk, for example, wrote in October that while ChatGPT is “an impressive conversational AI chatbot,” it doesn’t recommend using it for customer support because it’s not built to be a customer service tool, “at least not yet.”

Zendesk highlights the quirks that make using ChatGPT for customer service a bad idea for now: its tendency to “hallucinate,” or make things up and present them as facts; its logic and reasoning errors; its inability to answer highly specific or niche questions; the security risks that may arise when it’s given customer information; and how it can give “biased or discriminatory responses that may harm your brand’s reputation.”

Plus, it can also end up selling your competitors instead.

Information for this story was found via Threads, Reddit, and the sources and companies mentioned. The author has no securities or affiliations related to the organizations discussed. Not a recommendation to buy or sell. Always do additional research and consult a professional before purchasing a security. The author holds no licenses.